- ChatGPT is an AI chatbot built on large language models, using transformer architecture to generate human-like conversations, answer questions, and perform creative tasks.

- Newer versions like GPT-4o and o1-preview bring multimodality and reasoning, enabling ChatGPT to process images, sound, and complex step-by-step logic for better accuracy.

- ChatGPT powers many real-world use cases, from coding help and content creation to customer support and lead generation, making it versatile for individuals and enterprises alike.

ChatGPT made tsunami-sized waves when it first dropped to the public in 2022. Since then, it’s been at the forefront of news headlines, changing laws, and an evolving workforce.

OpenAI’s GPT chatbot is consistently ranked at the top of the best AI chatbots. But what is it, really?

What is ChatGPT?

ChatGPT is an artificial intelligence chatbot powered by a large language model (LLM) and developed by OpenAI.

It uses machine learning and natural language processing (NLP) to understand input and provide relevant output – just like a human conversation.

How does ChatGPT work?

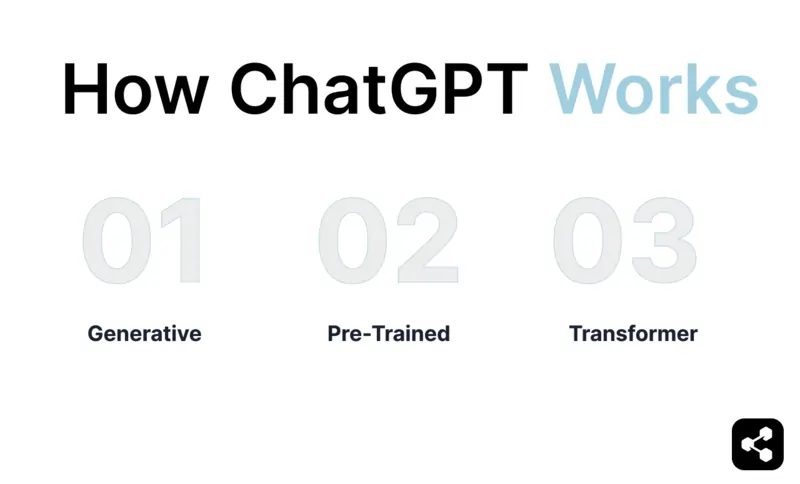

The GPT of ChatGPT stands for generative pre-trained transformer. Each of these 3 elements is key to understanding how ChatGPT works.

Generative

ChatGPT is a generative AI model – it can generate text, code, images, and sound. Other examples of generative AI are image generation tools like DALL-E or audio generators.

Pre-Trained

The ‘pre-trained’ aspect of ChatGPT is why it seems to know everything on the internet. The GPT model was trained on large swathes of data in a process called ‘unsupervised learning.’

Before GPT-style models, many AI models were built with supervised learning – they were given clearly labeled inputs and outputs and taught to map one to the other. This process was slow, since the labeled datasets had to be compiled by humans.

When the early GPT models were exposed to the large datasets they were trained on, they absorbed language patterns and contextual meaning from a wide variety of sources.

This is why ChatGPT is a general knowledge chatbot – it was already trained on a huge dataset before being released to the public.
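To make the idea of learning from unlabeled text concrete, here is a minimal sketch in Python. It is not how GPT is implemented: real models train neural networks over tokens, while this toy just counts which word follows which. The corpus and the function names (`next_word_pairs`, `train_bigram_model`, `predict_next`) are made up for illustration. The point is that the training "labels" (the next word) come from the text itself, so nobody has to annotate anything by hand.

```python
from collections import Counter, defaultdict

def next_word_pairs(text):
    """Turn raw text into (previous word, next word) training pairs."""
    words = text.lower().split()
    return list(zip(words, words[1:]))

def train_bigram_model(text):
    """Count which word follows which; the labels come from the text itself."""
    counts = defaultdict(Counter)
    for prev, nxt in next_word_pairs(text):
        counts[prev][nxt] += 1
    return counts

def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = model.get(word.lower())
    return followers.most_common(1)[0][0] if followers else None

# Hypothetical toy corpus; no human-written labels are involved.
corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram_model(corpus)
print(predict_next(model, "the"))  # "cat" follows "the" most often here
```

A larger corpus gives the model more patterns to pick up, which is the same intuition behind pre-training on huge datasets.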

Users who want to further train the GPT engine – to become specialized in certain tasks, like writing reports for your unique organization – can use techniques to customize LLMs.

Transformer

Transformers are a type of neural network architecture introduced in a 2017 paper titled "Attention is All You Need" by Vaswani et al. Before transformers, models like recurrent neural networks (RNNs) and long short-term memory (LSTM) networks were commonly used for processing sequences of text.

RNNs and LSTM networks would read text input sequentially, the same way a human would. But transformer architecture is able to process and evaluate each word in a sentence at the same time, allowing it to score some words as more relevant, even if they’re in the middle or at the end of a sentence. This is known as a self-attention mechanism.

Take the sentence: “The mouse couldn’t fit in the cage because it was too big.”

A transformer could score the word ‘mouse’ as more important than ‘cage’, and correctly identify that ‘it’ in the sentence refers to the mouse.

But a model like an RNN might interpret ‘it’ as being the cage, since it was the noun most recently processed.

The ‘transformer’ aspect allows ChatGPT to better understand context and produce more intelligent responses than its predecessors.
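The self-attention idea can be sketched in a few lines of Python. This is a simplified illustration, not ChatGPT's actual code: the two-dimensional word vectors below are invented for the example, and a real transformer uses learned query, key, and value projections (omitted here) over much larger vectors. The sketch only shows the core step, scaled dot-product attention, where every word is scored against every other word at once.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values."""
    dim = len(vectors[0])
    all_weights, outputs = [], []
    for query in vectors:
        # Score this word against every word in the sentence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        all_weights.append(weights)
        # Blend all word vectors according to the attention weights.
        outputs.append([
            sum(w * vec[i] for w, vec in zip(weights, vectors))
            for i in range(dim)
        ])
    return all_weights, outputs

# Made-up embeddings: "it" is deliberately placed closer to "mouse".
tokens = ["mouse", "cage", "it"]
embeddings = [[1.0, 0.2], [0.1, 1.0], [0.9, 0.3]]

weights, _ = self_attention(embeddings)
it_weights = dict(zip(tokens, weights[tokens.index("it")]))
print(it_weights)
```

With these toy vectors, "it" puts more attention weight on "mouse" than on "cage", regardless of which noun appeared most recently in the sentence.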

History of ChatGPT Models

While OpenAI produced LLMs GPT-2 and GPT-3, it wasn’t until GPT-3.5 that these models began to power ChatGPT.

GPT-3.5

Released in November 2022, GPT-3.5 powered the first public version of ChatGPT.

GPT-3.5 Turbo

The 2023 Turbo update improved the accuracy of ChatGPT’s responses, although it used a similar model to 3.5.

GPT-4

March 2023 saw the release of a more advanced model. Compared to GPT-3.5, GPT-4 was more powerful and better optimized. In ChatGPT, it was initially available to paying ChatGPT Plus subscribers.

GPT-4 Turbo

In November 2023, OpenAI launched a version of GPT-4 with a far larger context window than its predecessor.

GPT-4o

GPT-4o was released in May 2024, the first truly multimodal LLM from OpenAI. The ‘o’ stood for ‘omni’, a nod to the model’s ability to analyze and generate text, images, and sound.

Notably, the 4o model was twice as fast and half the cost of GPT-4 Turbo, and was made available to all ChatGPT users (with a usage limit).

GPT-4o Mini

The Mini version of GPT-4o was released in July the same year. Its API costs were even lower than the original 4o model, and it replaced GPT-3.5 Turbo as the standard model for ChatGPT users.

OpenAI o1-preview

The latest release from OpenAI was the new o1 series, which debuted on September 12, 2024 after a highly anticipated lead-up.

The preview model became immediately available on ChatGPT, albeit with low usage limits.

The o1 models are the first LLMs that claim to reason. If the o1 model is given a prompt, it won’t answer immediately – hence the long wait time.

Instead, it will reason through each of the steps, carefully considering each piece of information and its implications before deciding on the next course of action. It won't provide an answer until it has thought through the entire series of steps required.

OpenAI o1-mini

The o1-mini is smaller than o1-preview and 80% cheaper. It’s built for everyday tasks that require advanced reasoning, like coding or mathematics.

GPT-5

Users are unsure whether the latest o1 launch is the replacement or the predecessor for the long-awaited GPT-5 model. They may not receive confirmation until the next OpenAI launch.

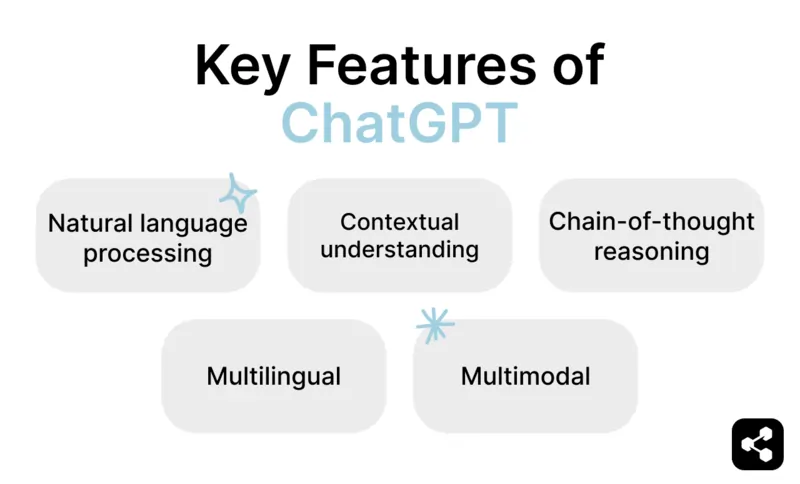

Key Features of ChatGPT

Natural language processing

Natural language processing (NLP) is a branch of AI that focuses on the natural language interactions between machines and humans.

NLP aims to enable machines to interpret and respond to human language in a way that is meaningful and useful. Under the broad umbrella of NLP are natural language understanding (NLU) and natural language generation (NLG).

NLP is what enables ChatGPT to process, understand, and generate human-like responses. It involves recognizing patterns, sentiment analysis, translation, and contextual understanding.

Multilingual

While most LLMs are multilingual – the nature of unsupervised training – few offer the vast language support seen in ChatGPT.

ChatGPT can process and respond in most languages, including coding languages.

ChatGPT can be used in over 80 languages so far, a number leagues ahead of its competitors. The full list of languages supported by ChatGPT includes Kyrgyz, Min Nan, Oriya, Sindhi, Irish, Bashkir and Chhattisgarhi.

Multimodal

Since the 4o model, ChatGPT has been solidly multimodal. You can upload an image of a pile of items and ask it to locate your keys within the photo. You can ask it to read you a bedtime story.

Its multimodality comes from the integration of specialized models for handling different types of data. The core language model (the transformer architecture) is extended by adding vision models that can process image inputs.

These vision models use architectures such as convolutional neural networks (CNNs) or vision transformers to extract features from images, turning visual data into numerical representations (embeddings) that the language model can understand.

Contextual understanding

When you have a conversation with ChatGPT, it tracks and references past information throughout the session (and beyond). This capability comes from multiple features, including the transformer architecture’s self-attention mechanism.

Its contextual understanding means it can remember previous questions and preferences, which leads to more dynamic, human-like conversations.

Chain-of-thought reasoning

The new OpenAI o1 models use chain-of-thought reasoning, a longer and more thorough way of breaking down requests.

Rather than answering immediately, an o1 model works through the problem step by step, weighing each piece of information and its implications, and only responds once it has reasoned through the full series of steps. This is why its replies take longer.

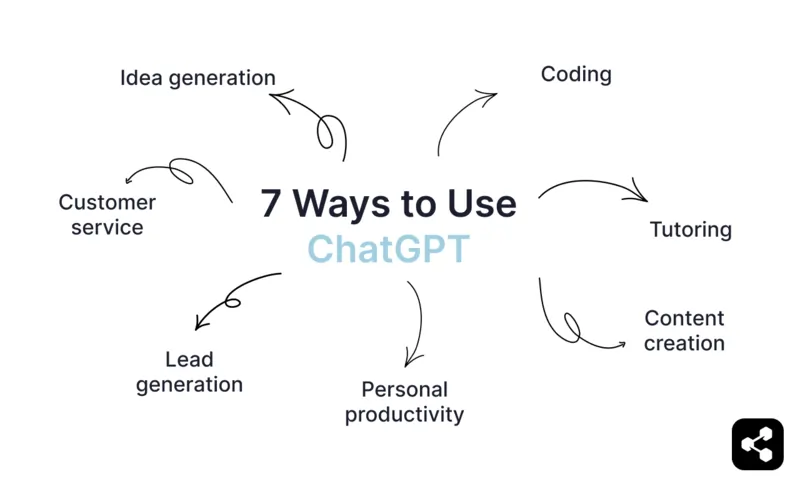

7 Ways to Use ChatGPT

1) Idea generation

Need a catchy slogan? What about ideas to increase your use of AI in your sales funnel? ChatGPT can help you brainstorm any organizational or personal task.

From marketing strategies to AI lead generation strategies, an AI chatbot is a great place to get started. Even if ChatGPT doesn’t hit a home run on the first try, its suggestions can give you a starting point to build on yourself.

2) Coding

ChatGPT can assist with generating code, explaining programming concepts, and debugging issues.

It supports multiple languages and frameworks, allowing you to write functions, solve algorithmic problems, or troubleshoot errors. Both experienced developers and beginners can use it as a tool while coding.

3) Customer service

One of the most common organizational applications of GPT is customer service. But this application, as you can guess, requires some tweaking.

Building a custom AI chatbot or AI agent with GPT is relatively easy with AI chatbot platforms.

Botpress users have been able to use GPT chatbots to significantly reduce the cost of their operations while improving customer support – one telehealth service reduced its support tickets by 65% with 0 hallucinations.

4) Tutoring

ChatGPT can serve as a personal tutor, helping you understand complex topics in subjects like math, science, history, or language.

It can break down concepts, provide examples, and answer questions interactively.

However, in order to get accurate information from ChatGPT, this task is best suited to information that was widely available online before the model’s information cut-off date. Ask about how a country’s electoral system works, not the latest political election news.

5) Content creation

One of the most popular requests of ChatGPT is generating content – from blog posts to Facebook status updates to HR emails to rhyming poems for your friend’s birthday, it does it all.

You can ask ChatGPT to generate a full content piece, ask it for inspiration, or co-draft an output by feeding the chatbot fragments and asking it to finish the task. Under OpenAI’s terms of use, you own the output you generate, though it’s still worth checking that the text doesn’t reproduce someone else’s copyrighted work.

Next time you need to send a polite email to your annoying co-worker, feed your frustrated draft into ChatGPT and ask it to refine for a more positive tone.

6) Personal productivity

One of the most overlooked uses of ChatGPT is everyday productivity tasks.

You can ask ChatGPT to prioritize your to-do list, suggest strategies for focusing on your work, or create a meal plan based on your dietary restrictions. It can also draft emails, propose an optimized schedule, and suggest coping mechanisms, similar to a therapist.

7) Lead generation

Another common external use case for ChatGPT and the GPT engine is AI lead generation. More and more companies are building AI chatbots to interact with website visitors or potential leads.

These kinds of AI chatbots are often deployed on websites or channels like WhatsApp or Facebook Messenger. Sometimes they handle outbound outreach, and sometimes they act as a lead magnet, like a chatbot that dispenses free information to potential leads.

Data Privacy

Unfamiliar with LLMs, many of ChatGPT’s early users were unsure how much of their data was being saved – or how it was being used – by OpenAI.

Here are a few of the most common ChatGPT data privacy questions:

Does ChatGPT save its users’ data?

Yes, ChatGPT and OpenAI may collect:

- All text input to ChatGPT (e.g. prompts, questions)

- Geolocation data

- Commercial information (e.g. transaction history)

- Contact details

- Device and browser cookies

- Log data (e.g. IP address)

- Account information (e.g. name, email, and contact information)

Does ChatGPT sell data?

No, ChatGPT doesn’t sell your data. ChatGPT doesn’t share user data with third parties without consent. The data collected is used only to improve the chatbot's performance and provide a better user experience.

How do I delete my ChatGPT data?

You can delete the data stored by ChatGPT by deleting your account. OpenAI will delete all of your data within 30 days.

But bear in mind: if you want to create a new account, you’ll need to do so with a new email address. You cannot delete your account and then open a new account with the same email.

You can still use ChatGPT without an account, but it will only support one conversation at a time.

Build your own ChatGPT chatbots

ChatGPT is a generalist chatbot, but you can use the powerful GPT engine from OpenAI to build your own custom AI chatbot.

Harness the power of the latest LLMs with your own custom chatbot.

Botpress is a flexible and endlessly extendable AI chatbot platform. It allows users to build any type of AI agent or chatbot for any use case.

Integrate your chatbot to any platform or channel, or choose from our pre-built integration library. Get started with tutorials from the Botpress YouTube channel or with free courses from Botpress Academy.

Start building today. It’s free.

FAQs

1. What is a “context window” and why does it matter?

A context window is how much text the model can “remember” at once, like its short-term memory. A bigger window means it can handle longer conversations or documents without losing track.
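As a rough illustration of why window size matters, the sketch below keeps only the most recent messages that fit within a fixed budget. It is a simplification under stated assumptions: `count_tokens` treats each whitespace-separated word as one token, whereas real models use subword tokenizers, and `fit_to_window` is a hypothetical helper, not an OpenAI function.

```python
def count_tokens(text):
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())

def fit_to_window(messages, max_tokens):
    """Keep the most recent messages whose combined size fits in max_tokens."""
    kept = []
    used = 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # Everything older than this point falls out of the window.
        kept.append(message)
        used += cost
    return list(reversed(kept))

history = [
    "My name is Ada and I work in billing.",
    "What is a context window?",
    "Can you explain it with an example?",
]
print(fit_to_window(history, max_tokens=12))
```

With a budget of 12, the oldest message (the one containing the user's name) no longer fits, which is the "losing track" behavior a larger window avoids.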

2. Can I train a GPT model with my own company data without exposing it to OpenAI?

Yes, you can fine-tune or augment GPT models with your own data using private infrastructure or third-party platforms that don’t send data back to OpenAI. Just make sure to check each provider’s data privacy terms.
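One common way to "augment" a model with your own data is to look up relevant passages yourself and include them in the prompt. The sketch below is a toy version of that idea under loud assumptions: the documents are invented, `score` and `build_prompt` are hypothetical helpers, and the word-overlap ranking stands in for the embedding-based search that real systems typically use. The lookup step runs entirely on your own machine; only the assembled prompt is sent to whichever model endpoint you choose.

```python
def score(question, document):
    """Count how many distinct question words also appear in the document."""
    question_words = set(question.lower().split())
    document_words = set(document.lower().split())
    return len(question_words & document_words)

def build_prompt(question, documents, top_k=1):
    """Pick the best-matching documents and place them ahead of the question."""
    ranked = sorted(documents, key=lambda doc: score(question, doc), reverse=True)
    context = "\n".join(ranked[:top_k])
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

# Hypothetical company knowledge base.
company_docs = [
    "Refunds are processed within 5 business days.",
    "Our office is closed on public holidays.",
]
prompt = build_prompt("How long do refunds take?", company_docs)
print(prompt)
```

Because the company data is only injected at question time, the underlying model does not need to be retrained on it.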

3. What hardware or cloud services are required to host and run a large language model like GPT privately?

Running a large model like GPT privately typically requires high-end GPUs (like NVIDIA A100s) and plenty of memory. Alternatively, cloud services like AWS, Azure, or GCP offer LLM hosting options.

4. Can ChatGPT remember past conversations across multiple sessions?

By default, ChatGPT doesn’t remember conversations between sessions unless you use tools like memory features or external integrations that store chat history.

5. How can I integrate ChatGPT into my existing website or app?

You can use the OpenAI API or chatbot platforms like Botpress to connect ChatGPT to your site or app, with full control over how it interacts with users.
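For the API route, a minimal sketch using only Python's standard library might look like the following. It assumes the Chat Completions endpoint and an API key stored in an `OPENAI_API_KEY` environment variable; the model name, the system prompt, and the helper names (`build_chat_request`, `send_chat_request`) are illustrative choices, not requirements. The example only builds and prints the request body; calling `send_chat_request` would make a real, billable network call.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"

def build_chat_request(user_message, history=None, model="gpt-4o-mini"):
    """Assemble the JSON body: a system prompt, prior turns, then the new message."""
    messages = [{"role": "system", "content": "You are a helpful support assistant."}]
    messages.extend(history or [])
    messages.append({"role": "user", "content": user_message})
    return {"model": model, "messages": messages}

def send_chat_request(payload):
    """POST the payload and return the reply text (needs OPENAI_API_KEY set)."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {os.environ['OPENAI_API_KEY']}",
        },
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]

# Build (but do not send) a request for a hypothetical support question.
payload = build_chat_request("Where is my order?")
print(json.dumps(payload, indent=2))
```

Passing earlier turns in `history` is how your app keeps the conversation in context, since each API request is independent. A chatbot platform handles this bookkeeping for you.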